611 Highlights in R (Part II)

The raw .R file is here and the Rmd file is here.

Brief ‘Hello’ to R Markdown

Let me first introduce you to R Markdown and knitr. This format lets you integrate your analyses with text that can be automatically converted to HTML, Word, and PDF formats. I just produced a supplementary materials document using this, and it probably saved me a ridiculous number of hours. It’s easy to get started:

- Save your file with extension .Rmd.

-

Place something like the following in the very top of your file:

--- author: John Flournoy title: 611 Highlights in R (Part II) output: html_document --- -

Write as you normally would, using Markdown syntax for formatting. For example:

# This is a big heading 1. this is 2. a numbered list - this is a bulleted list - With **bold** and *italic* text -

When you want to throw in some R code, place it inside an R chunk by wrapping it in

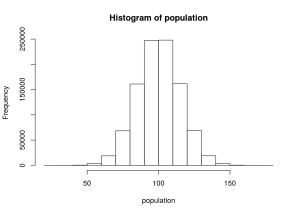

```{r}at the beginning and```at the end. When you do, it comes out something like this:# From Rose's presentation: # a toy data set for us to work with n <- 100 gender <- as.factor(c(rep("M", n/2), rep("F", n/2))) IQ <- rnorm(n, mean=100, sd=15) degree <- rep(c("HS", "BA", "MS", "PhD"), n/4) height <- as.numeric(gender)-2 + rnorm(n, mean=5.5, sd=1) RT1 <- rchisq(n, 4) RT2 <- rchisq(n, 4) DV <- 50 - 5*(as.numeric(gender)-1) + .1*IQ + rnorm(n) df <- data.frame(awesome=DV, gender=gender, IQ=IQ, degree=degree, height=height, RT1=RT1, RT2=RT2) head(df)## awesome gender IQ degree height RT1 RT2 ## 1 52.89486 M 79.40263 HS 6.292706 1.761990 2.228117 ## 2 57.04782 M 96.31289 BA 4.759042 2.236027 7.363253 ## 3 55.59594 M 104.95331 MS 5.560292 5.159114 7.126281 ## 4 55.44397 M 84.23347 PhD 5.354595 7.652900 2.836956 ## 5 55.45191 M 107.38754 HS 4.523997 7.768904 4.504664 ## 6 54.70468 M 110.07096 BA 6.139368 7.786462 7.508488

Notice that in step 4 we see the output of the command `head(df)`. This is useful for outputting tables and plots, as we’ll see.

Here are more resources on

Streamlined Data Workflow with dplyr

Download the data here:

Make sure you have the package (`library(dplyr)`), and if you don’t, install it (`install.packages('dplyr')`). Here’s an intro to dplyr straight from the horse’s (Hadley’s) mouth.
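If you’d rather not check by hand, a small sketch like the one below installs dplyr only when it is missing and then attaches it. The `repos` URL is an assumption on my part (the cloud CRAN mirror); any CRAN mirror should work, and it just keeps `install.packages()` from prompting for a mirror in a non-interactive session.

```r
# Install dplyr from CRAN only if it is not already installed,
# then attach it so its functions (and %>%) are available.
# The mirror URL below is an assumption; any CRAN mirror works.
if (!requireNamespace("dplyr", quietly = TRUE)) {
  install.packages("dplyr", repos = "https://cloud.r-project.org")
}
library(dplyr)
```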

Using data manipulation that I’m actually doing for a real project, this tutorial will introduce you to:

- `%>%` - a function chaining operator
- `mutate` and `transmute` - add new variables and preserve existing ones (`transmute` drops existing variables)
- `mutate_each` - adds new variables, applying the functions in `funs(...)` to each column
- `group_by` - groups the data set according to some variable (e.g., gender); other `dplyr` operations will then be done on the groups
- `summarise` - summarizes multiple values to a single value
- `left_join` - joins two data frames, returning all rows from `x`, and all columns from `x` and `y`
- `select` - keeps the variables/columns that you mention (see `?select` for special functions that help you select variables)
- `filter` - returns rows with matching conditions (similar to selecting rows like `aDataFrame[variable == value, ]`)
- `do` - performs arbitrary computation, returning either a data frame or arbitrary objects, which will be stored in a list (e.g., run the same `lm` or `t.test` separately for each gender or country)
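Here’s a minimal sketch of `%>%`, `mutate` and `transmute` on a small made-up data frame (`scores`, `RT_mean` and the values are hypothetical, not the tutorial’s downloadable data). `%>%` hands the result on its left to the first argument of the call on its right, so each line reads as “and then…”.

```r
library(dplyr)

# Hypothetical toy data standing in for the real data set
scores <- data.frame(
  id     = 1:4,
  gender = c("M", "M", "F", "F"),
  RT1    = c(2, 4, 3, 5),
  RT2    = c(1, 3, 2, 4)
)

# mutate(): add RT_mean and keep every existing column
with_mean <- scores %>%
  mutate(RT_mean = (RT1 + RT2) / 2)

# transmute(): compute the same column but keep only what is named (id, RT_mean)
only_mean <- scores %>%
  transmute(id, RT_mean = (RT1 + RT2) / 2)

print(with_mean)
print(only_mean)
```

The only difference between the two calls is which columns survive; the computed values are identical.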
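A heads-up on `mutate_each` and `funs(...)`: both are deprecated in current dplyr, and `across()` (added in dplyr 1.0.0) is the suggested replacement. The sketch below uses `across()` to apply a list of functions to each column, which is the same idea as `mutate_each(funs(...), ...)`; the data and the choice of `log`/`sqrt` are just for illustration.

```r
library(dplyr)

# Hypothetical toy data with two reaction-time columns
scores <- data.frame(
  id  = 1:4,
  RT1 = c(2, 4, 3, 5),
  RT2 = c(1, 3, 2, 4)
)

# across() applies every function in the named list to every selected column.
# With a list of functions the new columns are named "{column}_{function}",
# so the original RT1 and RT2 stay alongside the new ones.
transformed <- scores %>%
  mutate(across(c(RT1, RT2), list(log = log, sqrt = sqrt)))

names(transformed)
```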
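`group_by` and `summarise` are most useful together: after grouping, `summarise` collapses each group to one row. A minimal sketch on made-up data (`by_gender`, `mean_RT1` are hypothetical names):

```r
library(dplyr)

# Hypothetical toy data: two participants per gender
scores <- data.frame(
  gender = c("M", "M", "F", "F"),
  RT1    = c(2, 4, 3, 5)
)

by_gender <- scores %>%
  group_by(gender) %>%                        # later verbs work within each gender
  summarise(n = n(), mean_RT1 = mean(RT1))    # one row per gender

print(by_gender)
```

Here `n()` counts the rows in each group, and the result has one row for `F` and one for `M`.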
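To see `left_join`, `filter` and `select` chained together, here’s a sketch with two hypothetical tables: trial-level reaction times and participant demographics. Participant 3 has no demographics row, which shows that `left_join` keeps every row of `x` and fills the missing `y` columns with `NA`.

```r
library(dplyr)

# Hypothetical tables: trials (x) and participant info (y)
trials <- data.frame(id = c(1, 1, 2, 3), RT = c(2.1, 2.5, 3.0, 1.8))
people <- data.frame(id = c(1, 2), gender = c("M", "F"))

# All 4 rows of trials survive; id 3 gets gender = NA
joined_all <- left_join(trials, people, by = "id")

joined <- joined_all %>%
  filter(!is.na(gender), RT > 2) %>%  # like joined_all[!is.na(joined_all$gender) & joined_all$RT > 2, ]
  select(id, gender, RT)              # keep (and order) just these columns

print(joined)
```

`select` also understands helpers such as `starts_with("RT")`; `?select` lists the rest.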
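Finally, a sketch of `do` fitting the same `lm` separately for each gender. Inside `do`, `.` stands for the current group’s data, and naming the argument (`model = ...`) stores each fitted model in a list column. The data below are made up, and note that `do` still works in dplyr 1.x but its documentation marks it as superseded.

```r
library(dplyr)

# Hypothetical toy data: five people per gender
scores <- data.frame(
  gender  = rep(c("M", "F"), each = 5),
  IQ      = c(90, 95, 100, 105, 110, 92, 97, 102, 107, 112),
  awesome = c(59, 59.6, 60, 60.4, 61, 54.1, 54.6, 55.3, 55.7, 56.1)
)

# One lm() per gender; each result is stored in the list column `model`
models <- scores %>%
  group_by(gender) %>%
  do(model = lm(awesome ~ IQ, data = .))

# Pull the IQ slope out of each stored lm object
slopes <- sapply(models$model, function(m) coef(m)[["IQ"]])
print(slopes)
```

Swapping `lm(...)` for `t.test(...)` follows the same pattern.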