Next week we’ll have another consultation about analyzing breaks in time series data (i.e., when you have a long string of repeated measures, how can you tell when a participant “breaks” from their pattern?). We’d love to consider a range of approaches, from basic time series models like ARIMA through more flexible multilevel modeling approaches (which may actually make the most sense, because the data are nested). To see what time series material we cover in 612, see this RPubs document (the second half covers autocorrelation and time series). If you want to get in the mood, go to Naomi’s brown bag talk on Friday (tomorrow), 12pm-1pm in Straub 256. She’ll be talking about an MLM approach for these data.
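To make the "basic time series model" end of that range concrete, here is a rough sketch in base R. It is not the approach Naomi will present, and it ignores the nesting entirely: it simulates one made-up participant with a level shift, fits an AR(1) model with `arima()`, and flags time points whose standardized residuals are unusually large. The shift size, the AR coefficient, and the cutoff of 3 are all arbitrary assumptions for illustration.

```r
set.seed(1)
n <- 100

# simulated (not real) participant: AR(1) noise plus a level shift starting at time 61
y <- as.numeric(arima.sim(model = list(ar = 0.5), n = n))
y[61:n] <- y[61:n] + 6

# fit an AR(1) model with a constant mean to the whole series
fit <- arima(y, order = c(1, 0, 0), method = "ML")

# standardize the one-step-ahead residuals by the estimated innovation SD
z <- as.numeric(residuals(fit)) / sqrt(fit$sigma2)

# time points where the participant departs sharply from the fitted pattern
# (the cutoff of 3 is an arbitrary choice)
breaks <- which(abs(z) > 3)
breaks
```

With many participants you would have to run something like this separately for each person, which is part of why a multilevel approach may be the better fit.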

Week 6 will be map day! Yes, maps, like for finding treasure. Except these maps won’t be about treasure so much as outreach and recruitment… There are so many great mapping packages in R! I did a little mapping stuff last year for a class in library sciences, so I jotted down some code real quick to give you an idea of how some of this could work:

# let's say we posted flyers at prince pucklers, moss street day care, and peace health hospital

# and let's say two families told us they saw flyers at moss street

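# note: current versions of ggmap need a Google Maps API key before geocode() or qmap() will work; set it with register_google(key = "...")

library(ggmap)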

library(ggplot2)

flyer1 = geocode("moss street day care eugene or") # this is literally a google maps search!

flyer2 = geocode("1605 E 19th Ave Eugene, OR 97403") # this is the address for prince pucklers

flyer3 = geocode("peace health hospital eugene or")

family1 = flyer1

family2 = flyer1

map.data = rbind(flyer1, flyer2, flyer3, family1, family2)

map.data$type = c(rep("flyer", 3), rep("family", 2))

map.data

base.map = qmap("eugene, or", zoom=14, maptype="roadmap") # get a map of eugene (again, this is just a google map search)

map = base.map +

  geom_point(data = map.data, aes(x = lon, y = lat, color=type, shape=type), size=8, alpha=0.5) # add the flyer and family info

map # print the map object so it actually displays

qmap("university of oregon", zoom=14, maptype="watercolor") # just because :)

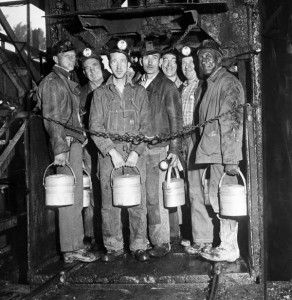

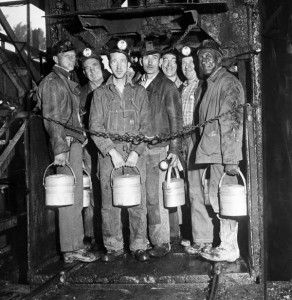
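Stacking the family points on top of the flyer point works for two families, but it gets hard to read as reports pile up. One alternative is to count families per flyer site first. The sketch below is base R only, so it needs no geocoding or API key. The `reports` data frame and the short site labels are hypothetical stand-ins for however you actually log where families saw a flyer.

```r
# hypothetical report log: one row per family, naming the flyer site they mentioned
reports <- data.frame(
  family = c("family1", "family2"),
  site   = c("moss street", "moss street")
)

# every site where we posted a flyer, including ones nobody has mentioned yet
sites <- c("moss street", "prince pucklers", "peace health")

# count families per site; using a factor keeps the zero-count sites in the table
counts <- table(factor(reports$site, levels = sites))

site.counts <- data.frame(site = sites, families = as.vector(counts))
site.counts
```

From there you could attach the geocoded `lon` and `lat` for each site and map `families` to point size instead of relying on overlapping points.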

And for week 7, we’re going to talk about model selection in machine learning/statistical learning/data mining/whatever you want to call it. Into the data mines!