News!

Our paper on cell types of the octopus visual system is now out at Current Biology!

Check out coverage from the University of Oregon.

Two papers on new approaches to studying vision in natural contexts!

Distance estimation in an ethological task (eLife) and visual coding during free movement (Neuron).

Read the University of Oregon news coverage.

Our Research

How do we make sense of the visual world around us? Our brain takes the pattern of photons hitting the retina and continually creates a coherent interpretation of what we see, detecting objects and landmarks rather than just perceiving an array of pixels. This image processing allows us to perform a range of visual tasks, such as recognizing a friend’s face, finding our way to the grocery store, and catching a frisbee. However, how the neural circuitry of the visual system achieves these computational feats remains largely unknown. Furthermore, visual processing does not occur in isolation; it depends on behavioral state, task demands, and interaction with the world.

Our research is focused on understanding how neural circuits carry out the image processing that underlies complex visual behaviors, and how development and experience refine these circuits. We use in vivo recording techniques, including high-density extracellular recording and widefield and two-photon imaging, along with molecular genetic tools to dissect neural circuits. We have also implemented behavioral tasks for mice so that we can perform quantitative psychophysics to measure the animals’ perception, and we use theoretical models to understand the general computational principles these circuits instantiate. Recently, we have extended these approaches to study visual perception in the context of natural behaviors and complex environments, in an effort to understand how the visual system functions in real-world conditions. We are also studying the octopus visual system to understand how a completely different brain architecture processes visual information.

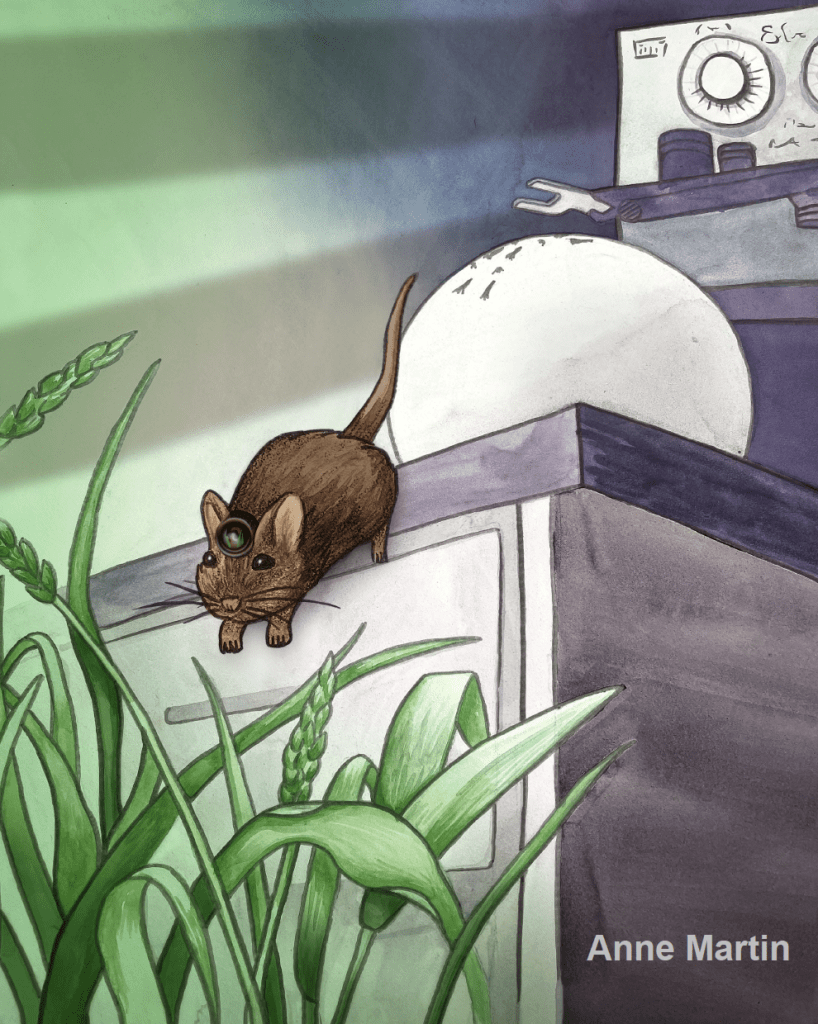

Left: Mice use vision to pursue and capture crickets, providing an ethological behavioral paradigm to study vision. Middle: Two-photon calcium imaging of neural activity in mouse visual cortex. Right: A juvenile Octopus bimaculoides feeding on brine shrimp.

In this lab, we believe that science should be supportive and inclusive.

Everyone is welcome regardless of race, background, or beliefs.

We advocate strongly for students and scientists from under-represented and disadvantaged groups.